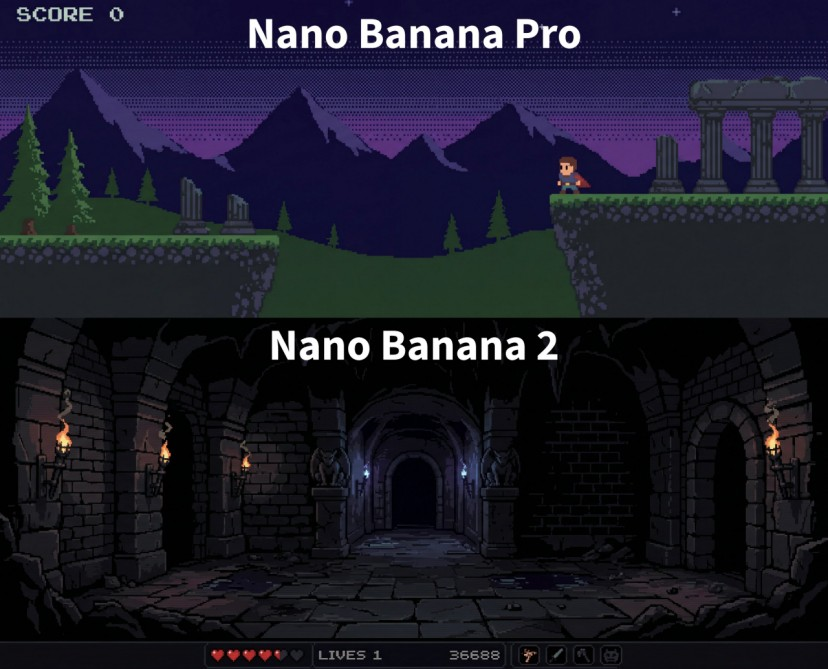

On February 26, 2026, Google DeepMind launched Nano Banana 2 (officially named Gemini 3.1 Flash Image), a major release for the image generation field. More than a routine upgrade, it signals a paradigm shift in AI image generation from "static pattern matching" to "dynamic, knowledge-driven" generation.

Core Breakthrough: Beyond Speed, It's About "Understanding"

Real-Time Web Grounding: Equipping the Image Model with a "Brain"

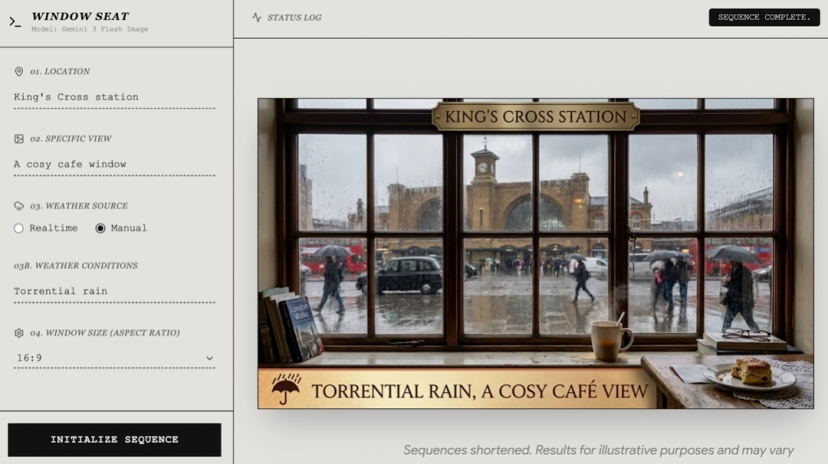

The most revolutionary aspect of Nano Banana 2 is its integration with Gemini's full search capabilities. Traditional image models rely solely on static patterns from their training data. Nano Banana 2, by contrast, can retrieve web information in real time and bring real-world geography, cultural context, and weather conditions into the generation process.

In the "Window Seat" demo, the model generates photorealistic window views from a user-specified location and real-time weather data. Given the prompt "a cozy cafe window view of King's Cross Station in London, torrential rain," for example, the model draws on its knowledge of the station's architecture and combines it with the weather conditions to render raindrops refracting light on the glass.
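Conceptually, grounding means enriching the request with retrieved facts before any pixels are drawn. The sketch below is a minimal illustration under stated assumptions: `lookup_conditions` is a hypothetical stand-in for a live search or weather query (it returns canned data so the example runs offline), and the `[context]` line format is invented for illustration. It is not how Gemini represents grounded context internally.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    location: str
    condition: str   # e.g. "heavy rain"
    local_time: str  # e.g. "16:40"


def lookup_conditions(location: str) -> Observation:
    """Hypothetical stand-in for a real-time search/weather lookup.

    Returns canned data so the example runs without network access.
    """
    canned = {
        "King's Cross Station, London": Observation(
            "King's Cross Station, London", "heavy rain", "16:40"
        ),
    }
    return canned.get(location, Observation(location, "clear", "12:00"))


def ground_prompt(user_prompt: str, location: str,
                  weather_override: Optional[str] = None) -> str:
    """Append retrieved real-world context to the user's prompt.

    Weather stated explicitly in the request (e.g. "torrential rain")
    takes precedence over the looked-up condition.
    """
    obs = lookup_conditions(location)
    condition = weather_override or obs.condition
    return (
        f"{user_prompt}\n"
        f"[context] location: {obs.location}; "
        f"weather: {condition}; local time: {obs.local_time}"
    )
```

Calling `ground_prompt("A cozy cafe window view", "King's Cross Station, London", weather_override="torrential rain")` keeps the user's rain while still attaching the location and local time; omitting the override falls back to the (here simulated) live condition.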

Hierarchical Generation: Think First, Render Later

Nano Banana 2 uses a hierarchical generation strategy: it first completes scene understanding, composition planning, and reasoning about physical relationships at a lower resolution, then upscales the result to 2K or 4K through an efficient pipeline. This "think first, render later" approach maintains Pro-level quality while cutting generation time to 4–6 seconds.
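The toy example below illustrates the coarse-to-fine idea in general terms; it is not Google's pipeline. The layout is decided once on a small grid of semantic labels, then each cell is expanded into a block of the high-resolution output. The function names, the three-label scene, and the nearest-neighbour expansion are all illustrative assumptions (a 256-pixel plan expanded by a factor of 8, for instance, would reach 2,048 pixels, roughly 2K).

```python
from typing import List

Grid = List[List[str]]


def plan_scene(rows: int, cols: int) -> Grid:
    """Stage 1 ("think first"): decide the composition on a small grid.

    Each cell holds a semantic label rather than pixels: the top third is
    sky, the lower-left half a building, the lower-right half a street.
    """
    grid: Grid = []
    for r in range(rows):
        row = []
        for c in range(cols):
            if r < rows // 3:
                row.append("sky")
            elif c < cols // 2:
                row.append("building")
            else:
                row.append("street")
        grid.append(row)
    return grid


def upscale(grid: Grid, factor: int) -> Grid:
    """Stage 2 ("render later"): expand every coarse cell into a
    factor x factor block using nearest-neighbour repetition.

    A real renderer would synthesise texture and detail here; this toy
    version only shows that layout decisions made at low resolution
    carry over unchanged to the high-resolution output.
    """
    out: Grid = []
    for row in grid:
        wide = [label for label in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out
```

The design point is that the expensive reasoning (where things go) happens on the small grid, so the upscaling stage never has to revisit composition.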

Precision Text Rendering: Goodbye to Gibberish

Text rendering has long been a weak spot for AI image generators. Nano Banana 2 draws on Gemini's language model to understand what the text means and on its image generation capabilities to decide how the text should look, achieving near-perfect text rendering. Whether in marketing posters, UI prototypes, or multilingual localization, text comes out crisp and stylistically consistent.

Technical Highlights: Redefining Creative Workflows

Thought Signatures & Conversational Editing

Nano Banana 2 uses "Thought Signatures." When generating an image, the model works through a series of internal reasoning steps, and a thought signature is an opaque, encrypted representation of that reasoning returned alongside the output. In multi-turn conversational editing, these signatures are passed back with each request so the model can recall its earlier composition logic, lighting relationships, and design intent, which makes coherent localized edits possible.

Users can edit in plain natural language, such as "Change the background to sunset," "Make the person's shirt blue," or "Remove the tree on the left." No technical jargon is needed; it is as simple as talking to a professional designer.
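The client-side contract behind this can be sketched as follows: keep every model turn, including its signature, exactly as returned, and send the full history with the next edit. This is a self-contained simulation, not the Gemini SDK. `EditSession`, `Turn`, and `fake_model` are hypothetical names, and the stub "model" derives its signature from a hash purely so the example runs; real signatures are opaque values that the client should return unchanged.

```python
import hashlib
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Turn:
    role: str                        # "user" or "model"
    text: str
    signature: Optional[str] = None  # opaque token from the model; never edited


@dataclass
class EditSession:
    history: List[Turn] = field(default_factory=list)

    def send(self, instruction: str) -> Turn:
        """Append the user's edit, call the model with the full history,
        and store the reply (signature included) for later turns."""
        self.history.append(Turn("user", instruction))
        reply = fake_model(self.history)
        self.history.append(reply)
        return reply


def fake_model(history: List[Turn]) -> Turn:
    """Hypothetical model stub: it can only build on earlier reasoning
    if every previous model turn still carries its signature."""
    prior = [t for t in history if t.role == "model"]
    if any(t.signature is None for t in prior):
        raise ValueError("missing thought signature: prior reasoning context lost")
    state = "|".join(t.text for t in history)
    sig = hashlib.sha256(state.encode()).hexdigest()[:16]
    return Turn("model", f"edited image ({len(prior) + 1})", sig)
```

Usage: `s = EditSession(); s.send("Change the background to sunset"); s.send("Make the person's shirt blue")` yields a second reply built on the first. Dropping a stored signature makes the stub refuse the next edit, mirroring why signatures must survive every round trip.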

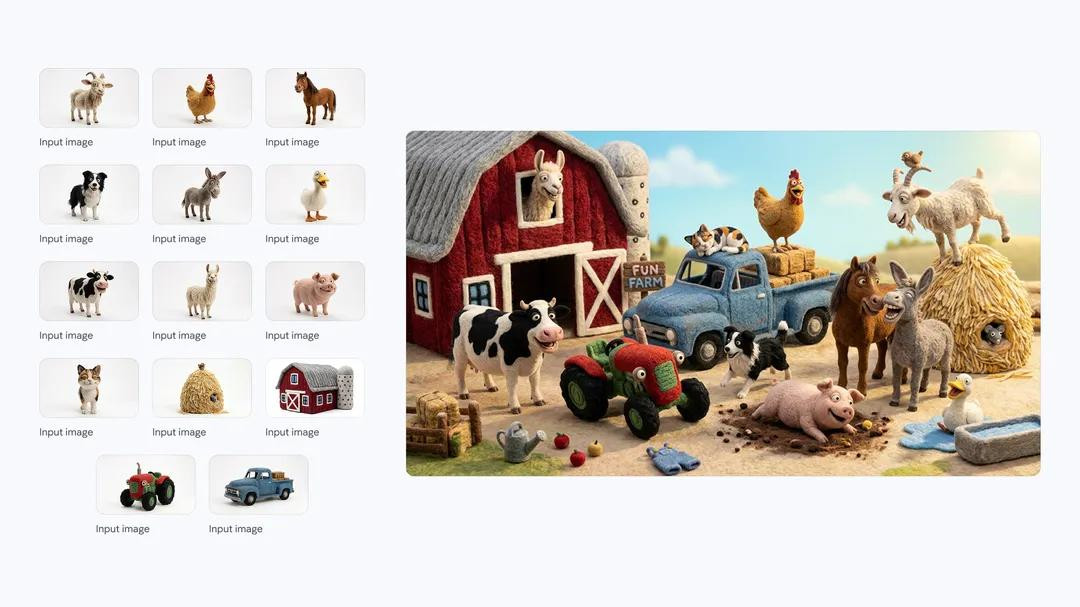

Superior Consistency Maintenance

Within a single workflow, Nano Banana 2 can keep up to 5 characters and 14 objects consistent, which is crucial for storyboarding, comic serialization, and brand asset management. In one official demo, the model fused a banana with a dinosaur plush toy into a dinosaur with a banana body, preserving the material characteristics of both objects.
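Because these limits are hard numbers, a pipeline that assembles storyboard or brand-asset requests could check them before submitting anything. The following is a hypothetical client-side pre-flight check: `Reference` and `check_references` are illustrative names, not part of any Google API, and the two constants simply restate the figures quoted above.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List

# Limits as stated for a single Nano Banana 2 workflow.
MAX_CHARACTERS = 5
MAX_OBJECTS = 14


@dataclass(frozen=True)
class Reference:
    name: str
    kind: str  # "character" or "object"


def check_references(refs: List[Reference]) -> List[str]:
    """Return a list of problems; an empty list means the set fits the limits."""
    problems: List[str] = []
    counts = Counter(r.kind for r in refs)
    unknown = set(counts) - {"character", "object"}
    if unknown:
        problems.append(f"unknown kinds: {sorted(unknown)}")
    if counts["character"] > MAX_CHARACTERS:
        problems.append(
            f"{counts['character']} characters exceeds limit of {MAX_CHARACTERS}"
        )
    if counts["object"] > MAX_OBJECTS:
        problems.append(
            f"{counts['object']} objects exceeds limit of {MAX_OBJECTS}"
        )
    return problems
```

A storyboard with five recurring characters and fourteen props passes; adding a sixth character returns a single, readable problem instead of a failed or inconsistent generation.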

Application Scenarios: From Creativity to Production

| Scenario | Capability | Example |
|---|---|---|
| Infographic Generation | Turn complex logic into visual diagrams | Decision flowcharts that show a reasoning process, such as "walk vs. drive to the car wash" |
| Global Marketing Localization | Translate in-image text and adapt the visuals | "Global Ad Localizer" automatically translates ads into multiple languages while adjusting visual elements |
| Real-Time Landscape Generation | Combine real geography with weather data | "Window Seat" generates real-time window views of any location worldwide |
| Character Design & Narrative | Keep characters consistent across scenes | Continuous storyboards showing the same character in different poses and outfits |
| E-Commerce Product Display | Batch-generate high-quality product images | 200 specification images produced in minutes instead of a 48-hour photography cycle |
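A quick back-of-the-envelope check of the e-commerce row, using the 4–6 seconds per image quoted for hierarchical generation: 200 images at 4–6 seconds each is 800–1,200 seconds, or roughly 13–20 minutes if requests run one after another. The helper below is an illustrative calculation only; it assumes a fixed per-image time and ignores queuing, rate limits, retries, and human review.

```python
import math


def batch_minutes(n_images: int, seconds_per_image: float, parallel: int = 1) -> float:
    """Idealized wall-clock minutes to generate n_images when `parallel`
    requests run at once and each takes seconds_per_image."""
    rounds = math.ceil(n_images / parallel)  # number of sequential rounds
    return rounds * seconds_per_image / 60
```

`batch_minutes(200, 6)` gives 20.0 minutes sequentially; with `parallel=4` the same job drops to 5.0 minutes under these idealized assumptions.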

Safety & Provenance: Responsible AI Innovation

As the boundary between AI-generated images and real photographs blurs, Nano Banana 2 employs a dual-layer provenance system:

- SynthID Watermarking: Invisible watermarks embedded in images, a system already used for over 20 million verifications

- C2PA Content Credentials: An open standard from the Coalition for Content Provenance and Authenticity, whose members include Adobe, Microsoft, and OpenAI, that records how and by whom an image was created

Together, the two layers answer not just "Was this AI-generated?" but also "How was it created?"

Conclusion: The Second Half of Image Generation Has Begun

The launch of Nano Banana 2 marks the entry of image generation into a "world knowledge" stage of competition. While competitors are still optimizing pixel quality, Google has shifted the battlefield to knowledge integration, real-time information, and cultural accuracy.

This model is no longer just a "drawing tool" but an intelligent assistant that expresses itself visually: it understands physical laws, geographic features, and cultural context, and it can translate complex logical reasoning into intuitive visual language.

For creators, this means less random trial-and-error, more precise control, and more efficient iteration; for businesses, it means compressing high-cost visual production that once took days into minutes.

Nano Banana 2 is not just a new model, but a new benchmark for AI image generation.